Ethics in AI & R&D: Innovation with Accountability

Artificial intelligence (AI) is revolutionizing research and development (R&D). It’s providing new ways to analyze data, discover patterns, and develop innovative solutions to complex problems.

In this article, we’ll explore the ethics of AI in R&D, and how to balance innovation with accountability.

The Role of AI in R&D

AI is transforming many industries, from healthcare to finance to energy. In healthcare, uses for AI include drug discovery and development, and medical imaging analysis.

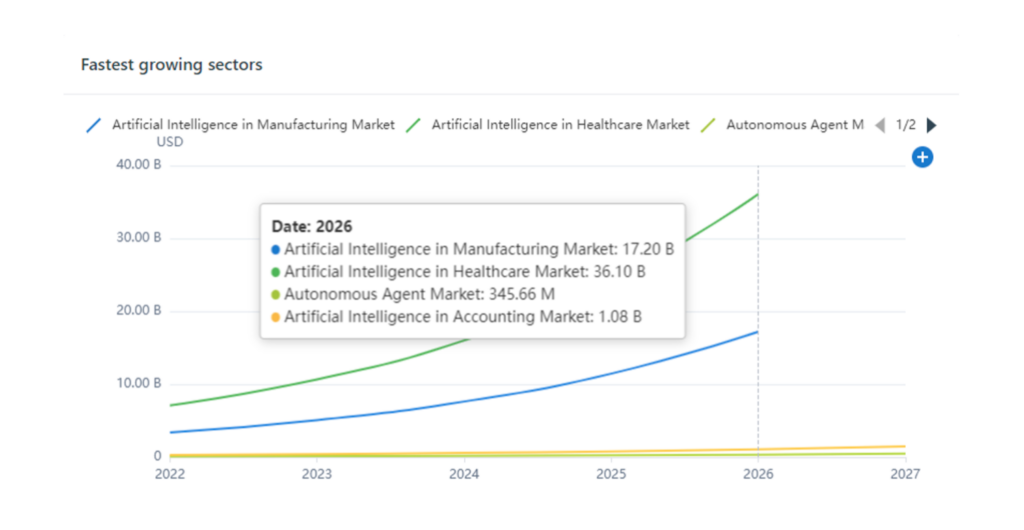

As the graph above illustrates, AI in healthcare is the fastest-growing sector in the AI market. This isn’t surprising, given that AI can improve diagnostic accuracy, treatment, patient outcomes, and quality of care.

In finance, AI analyzes financial data to make more accurate market predictions. And in energy, AI helps to optimize power grid operations and improve the efficiency of energy production. These are just a few examples of the many ways AI is transforming R&D.

The Benefits of AI in R&D

The use of AI in R&D offers a number of benefits, including the ability to:

- Analyze large datasets quickly and accurately

- Identify patterns that might not be apparent to human researchers

- Develop solutions that are tailored to specific needs

AI can also help to reduce costs and speed up the R&D process. This, in turn, allows researchers to develop new products and solutions more quickly and efficiently.

The Ethical Questions Raised by AI in R&D

However, the use of AI in R&D also raises a number of ethical questions. For example, who is responsible for the decisions made by an AI system? If AI is used to develop a new drug, who is accountable if the drug has unforeseen side effects? Similarly, if an AI system makes hiring decisions, what happens if the system is biased against certain groups?

Another ethical concern is the potential for AI to be used in harmful ways. For example, AI could be used to develop autonomous weapons or to create surveillance systems that violate people’s privacy rights.

Ethical Considerations in the Use of ChatGPT and Other AI Bots

ChatGPT is a powerful tool that can be used for a wide range of applications, including R&D. However, using bots like ChatGPT in R&D also raises ethical concerns that must be taken into account.

One ethical concern is the potential for bias in the data used to train the AI model. If the data used to train ChatGPT is biased or incomplete, the model can perpetuate that bias and make inaccurate or unfair predictions. The ethical stakes are highest when the model informs decisions that materially affect people’s lives.
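One simple way to look for this kind of bias is to compare how often a model gives a favorable decision to each group. The snippet below is a minimal sketch, not a complete fairness audit: the function names, the group labels, and the sample data are all hypothetical, and a ratio of selection rates is only one of several possible fairness measures.

```python
from collections import defaultdict


def selection_rates(records):
    """Return the fraction of favorable decisions per group.

    `records` is an iterable of (group, decision) pairs, where
    `decision` is True for a favorable outcome (hypothetical format).
    """
    totals = defaultdict(int)
    positives = defaultdict(int)
    for group, decision in records:
        totals[group] += 1
        if decision:
            positives[group] += 1
    return {group: positives[group] / totals[group] for group in totals}


def selection_rate_ratio(rates):
    """Ratio of the lowest to the highest selection rate (1.0 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a favorable decision
    return min(rates.values()) / highest


# Made-up illustrative data, not real results.
sample = [
    ("A", True), ("A", True), ("A", False), ("A", True),
    ("B", True), ("B", False), ("B", False), ("B", False),
]
rates = selection_rates(sample)
ratio = selection_rate_ratio(rates)
print(rates, round(ratio, 2))
```

A ratio well below 1.0 does not prove the model is unfair, but it is a signal that the training data and the model's decisions deserve a closer look. What counts as "too low" is a policy choice; a threshold such as 0.8 is sometimes used as a rule of thumb.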

Another ethical concern is the potential for unintended consequences. As AI models like ChatGPT become more powerful and sophisticated, they may be able to generate content or make decisions that are beyond the understanding of their human creators. This can create ethical dilemmas, particularly if the AI model produces harmful content or makes unethical decisions.

To address these ethical concerns, it’s important to establish clear ethical guidelines for the use of AI models in R&D, including considerations around privacy, data protection, and unintended consequences. Additionally, it’s crucial to regularly evaluate the use of AI models like ChatGPT in R&D to ensure that they are being used ethically and are not having unintended negative impacts on individuals or society as a whole.

Balancing Innovation with Accountability

A balance between innovation and accountability can be achieved through several measures, such as:

- Ensuring that AI systems are transparent and explainable, so that researchers and other stakeholders can understand how decisions are being made.

- Developing standards and guidelines for the use of AI in R&D, to ensure that ethical considerations are taken into account in the design and deployment of AI systems.

- Providing education and training to researchers and other stakeholders about the ethical considerations involved in the use of AI in R&D.

- Ensuring that there is clear accountability for the decisions made by AI systems, whether it lies with the researchers who develop the system, the users who implement it, or both.
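The transparency and accountability measures above can be made concrete by recording each AI-assisted decision together with an explanation and a named accountable party. The following is a minimal sketch under stated assumptions: `DecisionRecord`, `log_decision`, and every field name and sample value are hypothetical, and a real system would write to durable, access-controlled storage instead of an in-memory list.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class DecisionRecord:
    """One AI-assisted decision, kept for later review (hypothetical schema)."""
    model_name: str
    model_version: str
    input_summary: str
    output: str
    explanation: str        # human-readable reason for the output
    accountable_party: str  # person or team answerable for the decision
    timestamp: str = field(
        default_factory=lambda: datetime.now(timezone.utc).isoformat()
    )


def log_decision(record, log):
    """Append the record to `log` as a JSON line.

    Refuses to log a decision that has no accountable party or explanation.
    """
    if not record.accountable_party.strip():
        raise ValueError("every decision needs an accountable party")
    if not record.explanation.strip():
        raise ValueError("every decision needs an explanation")
    log.append(json.dumps(asdict(record)))


# Illustrative usage with made-up values.
audit_log = []
log_decision(
    DecisionRecord(
        model_name="candidate-screening-model",
        model_version="0.1",
        input_summary="compound batch 12, assay results",
        output="flag for further testing",
        explanation="predicted activity above review threshold",
        accountable_party="R&D review team",
    ),
    audit_log,
)
```

Requiring the accountable party and explanation at logging time means the question of who answers for a decision is settled before the decision takes effect, not after something goes wrong.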

Conclusion

The use of AI in R&D offers significant benefits, but also raises important ethical questions that must be addressed. By balancing innovation with accountability, researchers and other stakeholders can ensure that the benefits of AI are realized without compromising ethical considerations. As AI continues to transform R&D, it will be important to remain vigilant and ensure that AI is used in ways that are both innovative and ethical.